In-Short

- NVIDIA launches Dynamo, an open-source AI inference software to boost AI factories’ efficiency.

- Dynamo aims to maximize token revenue generation and performance across GPU fleets.

- The software introduces disaggregated serving and intelligent routing to optimize GPU utilization.

- Enterprises and AI innovators are expected to adopt Dynamo for enhanced AI inference capabilities.

Summary of NVIDIA’s Dynamo Launch

NVIDIA has introduced a new open-source software called Dynamo, designed to enhance the performance and scalability of reasoning models within AI factories. This innovative software is set to replace NVIDIA’s previous Triton Inference Server, offering advanced features to maximize token revenue generation. Dynamo’s disaggregated serving technique optimizes the processing and generation phases of large language models (LLMs) by allocating them to separate GPUs, leading to increased utilization and performance.

Jensen Huang, NVIDIA’s CEO, emphasizes the importance of custom reasoning AI and the role of Dynamo in serving these models at scale. The software has already shown promising results, doubling the performance and revenue of AI factories and significantly increasing token generation per GPU.

Dynamo’s dynamic GPU allocation, smart routing, and efficient memory management contribute to its ability to adapt to varying request volumes and types, ensuring cost-effective operations. Its compatibility with popular frameworks and the open-source approach encourages widespread adoption and innovation in AI inference serving.

Major cloud providers and AI innovators are expected to leverage Dynamo for its distributed serving capabilities and low-latency communication libraries. Companies like Cohere and Together AI plan to integrate Dynamo to scale advanced AI models and optimize resource utilization.

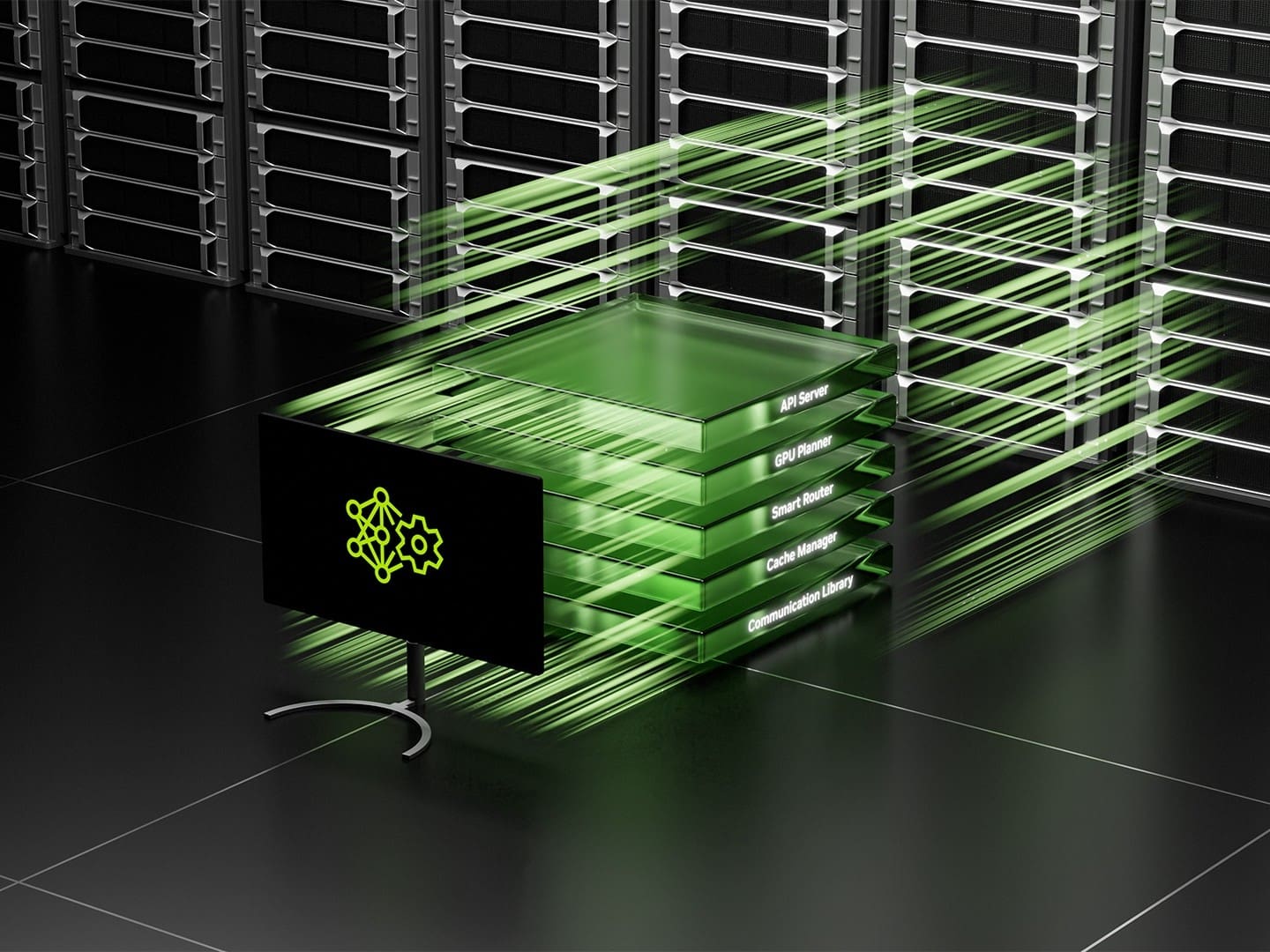

NVIDIA highlights four key innovations within Dynamo: GPU Planner, Smart Router, Low-Latency Communication Library, and Memory Manager. These features collectively reduce costs and enhance user experiences by managing resources efficiently and accelerating data transfers.

Dynamo will be available within NIM microservices and supported in a future release of NVIDIA’s AI Enterprise software platform.

Explore More

For a deeper dive into NVIDIA’s Dynamo and its impact on AI inference, visit the original source.